Anyway, something like:

In standard set notation:

A = {a1,a2,a3,ab,ac,abc}

B = {b1,b2,b3,ab,bc,abc}

C = {c1,c2,c3,ac,bc,abc}

And in BKO:

-- NB: coeffs for set elements are almost always in {0,1}

|A> => |a1> + |a2> + |a3> + |ab> + |ac> + |abc>

|B> => |b1> + |b2> + |b3> + |ab> + |bc> + |abc>

|C> => |c1> + |c2> + |c3> + |ac> + |bc> + |abc>

Now some simple examples of intersection (union is trivial/obvious/boring):

sa: intersection(""|A>, ""|B>)

|ab> + |abc>

sa: intersection(""|A>, ""|C>)

|ac> + |abc>

sa: intersection(""|B>, ""|C>)

|bc> + |abc>

sa: intersection(""|A>,""|B>,""|C>)

|abc>
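For readers without the sa: console to hand, the same intersections can be sketched in plain Python. This is only a rough model, not the project's implementation: a superposition is assumed to be a dict from ket label to coefficient, and `make_set` and `intersection` are hypothetical helper names. Taking the minimum coefficient is also an assumption, which for 0/1 coeffs reduces to ordinary set intersection.

```python
from functools import reduce

def make_set(*labels):
    """Model a set as a dict of label -> coefficient, with every coeff equal to 1."""
    return {label: 1 for label in labels}

A = make_set("a1", "a2", "a3", "ab", "ac", "abc")
B = make_set("b1", "b2", "b3", "ab", "bc", "abc")
C = make_set("c1", "c2", "c3", "ac", "bc", "abc")

def intersection(*sps):
    """Keep labels present in every input; coefficient is the minimum (assumed rule)."""
    def pair(x, y):
        return {k: min(x[k], y[k]) for k in x if k in y}
    return reduce(pair, sps)

print(intersection(A, B))     # {'ab': 1, 'abc': 1}
print(intersection(A, B, C))  # {'abc': 1}
```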

Now, let's observe what happens when we add our sets:

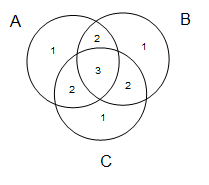

sa: "" |A> + "" |B> + "" |C>

|a1> + |a2> + |a3> + 2.000|ab> + 2.000|ac> + 3.000|abc> + |b1> + |b2> + |b3> + 2.000|bc> + |c1> + |c2> + |c3>

Since our sets only have coeffs of 0 or 1, when we add sets the coeff of each ket corresponds to how many sets that object is in (cf. the Venn diagram above). This is kind of useful. Indeed, even if we add "non-trivial" superpositions (i.e., those with coeffs outside of {0,1}), the resulting coeffs are also interesting. Anyway, an example of that later!
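The "coeff counts how many sets contain the object" observation can be checked with a small Python sketch. Here `collections.Counter` stands in for a superposition, which is an assumption made for illustration rather than how the sa: console works internally.

```python
from collections import Counter

# Each set as a Counter with every coefficient equal to 1.
A = Counter(["a1", "a2", "a3", "ab", "ac", "abc"])
B = Counter(["b1", "b2", "b3", "ab", "bc", "abc"])
C = Counter(["c1", "c2", "c3", "ac", "bc", "abc"])

# Adding Counters adds coefficients label by label.
total = A + B + C

print(total["abc"])  # 3: in all three sets
print(total["ab"])   # 2: in A and B only
print(total["a1"])   # 1: in A only
```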

Next thing I want to add is the idea of a "soft" intersection. The motivation is that intersection is close to, though maybe not exactly, what happens when a child learns. Say each time a parent sees a dog, they point and say "dog" to their child. Perhaps what is going on mentally is that, at each instance of this, the child does a set intersection of everything in mind at the time the child hears "dog". OK. Works kind of OK. But what happens if the parent says "dog" and there is no dog around? Standard intersection is brutal: one wrong example in the training set and suddenly the learnt pattern becomes the empty set! Hence the motivation for the idea of a "soft intersection".

-- this is similar to standard intersection:

sa: drop-below[3] (""|A> + ""|B> + ""|C>)

3.000|abc>

-- clean is a sigmoid that maps all non-zero coeffs to 1.

-- this is identical to standard intersection (at least for the case all coeffs are 0 or 1):

sa: clean drop-below[3] (""|A> + ""|B> + ""|C>)

|abc>

-- now consider this object:

sa: drop-below[2] (""|A> + ""|B> + ""|C>)

2.000|ab> + 2.000|ac> + 3.000|abc> + 2.000|bc>

-- and finally, here is our soft intersection:

sa: clean drop-below[2] (""|A> + ""|B> + ""|C>)

|ab> + |ac> + |abc> + |bc>
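Here is a minimal Python sketch of the four results above, again assuming a superposition is just a dict of label to coefficient. The functions `add`, `drop_below` and `clean` are hypothetical stand-ins: `drop_below` keeps coeffs greater than or equal to the threshold (which matches the drop-below[3] output above), and `clean` follows the description given here of mapping non-zero coeffs to 1.

```python
def make_set(*labels):
    return {label: 1 for label in labels}

A = make_set("a1", "a2", "a3", "ab", "ac", "abc")
B = make_set("b1", "b2", "b3", "ab", "bc", "abc")
C = make_set("c1", "c2", "c3", "ac", "bc", "abc")

def add(*sps):
    """Add superpositions by summing coefficients label by label."""
    total = {}
    for sp in sps:
        for label, coeff in sp.items():
            total[label] = total.get(label, 0) + coeff
    return total

def drop_below(sp, t):
    """Keep only the kets whose coefficient is at least t."""
    return {k: v for k, v in sp.items() if v >= t}

def clean(sp):
    """Map every non-zero coefficient to 1."""
    return {k: 1 for k, v in sp.items() if v != 0}

total = add(A, B, C)
print(clean(drop_below(total, 3)))  # {'abc': 1}, same as the strict intersection
print(clean(drop_below(total, 2)))  # {'ab': 1, 'ac': 1, 'abc': 1, 'bc': 1}
```

Lowering the threshold from the number of sets (3) to something smaller (2) is all that separates the strict intersection from the soft one in this model.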

So the assumed general pattern for learning is:

-- strict intersection version:

pattern |object: X> => common[pattern] (|object: X: example: 1> + |object: X: example: 2> + |object: X: example: 3> + |object: X: example: 4> + |object: X: example: 5> + ... )

-- softer intersection version:

pattern |object: X> => drop-below[t0] (drop-below[t1] pattern |object: X: example: 1> + drop-below[t2] pattern |object: X: example: 2> + drop-below[t3] pattern |object: X: example: 3> + drop-below[t4] pattern |object: X: example: 4> + drop-below[t5] pattern |object: X: example: 5> + ... )

For some set of parameters {t0, t1, t2, ...}
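To see why the softer version survives a bad training example, here is a toy Python sketch of both patterns applied to the "dog" story. Everything in it is invented for illustration: the feature labels, the threshold values, and the helper names `strict_pattern` and `soft_pattern`. The strict version is modelled as a plain set intersection, which is an assumption about what common[pattern] does.

```python
from collections import Counter

def strict_pattern(examples):
    """Strict version: keep only features present in every example."""
    common = set(examples[0])
    for example in examples[1:]:
        common &= set(example)
    return common

def drop_below(sp, t):
    """Keep only the features whose coefficient is at least t."""
    return Counter({k: v for k, v in sp.items() if v >= t})

def soft_pattern(examples, t0, thresholds=None):
    """Softer version: drop-below[t0] of the sum of per-example thresholded patterns."""
    if thresholds is None:
        thresholds = [0] * len(examples)  # t1, t2, ... default to keeping everything
    total = Counter()
    for example, t in zip(examples, thresholds):
        total += drop_below(Counter(example), t)
    return drop_below(total, t0)

# Hypothetical "everything in mind" each time the child hears "dog".
examples = [
    ["fur", "four legs", "tail", "barking", "grass"],
    ["fur", "four legs", "tail", "barking", "sofa"],
    ["fur", "four legs", "tail", "ball"],
    ["cup", "table"],  # the parent said "dog" with no dog around
]

print(strict_pattern(examples))         # set(): one bad example wipes the pattern
print(dict(soft_pattern(examples, 3)))  # {'fur': 3, 'four legs': 3, 'tail': 3}
```

With t0 = 3 out of 4 examples, the one dog-free example no longer empties the pattern, while features seen only once or twice (grass, sofa, barking) are still dropped.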

And I guess that is it for now.
